The Future of Mind: From Biological Brains to Intelligent Machines - The Complete Picture

Artificial General Intelligence (AGI) is the buzzword of the moment. But what is intelligence, really? How do humans exhibit it, and how does it differ from artificial systems—or even from the adaptive intelligence found in cells, ecosystems, or potentially extraterrestrial life? To unravel these questions, we must explore intelligence not as a single concept but as a spectrum, stretching from biological cognition to artificial systems and beyond.

You might wonder, wouldn’t it be better to first define intelligence? The challenge, as Marvin Minsky once pointed out, is that “intelligence” is a “suitcase word”: a term that packs together diverse abilities across many dimensions. Sometimes it means solving problems, other times self-awareness, emotional aptitude, or the capacity to navigate uncertainty. Biology, for example, excels at creating complex forms in morphogenetic space. Is that intelligence? Undoubtedly. So too is the ability to dream, to think outside the box, and to ask questions like, “What happens if you travel faster than the speed of light?” These forms of intelligence often evade existing benchmarks, yet they are nevertheless significant. Humans leverage intelligence in remarkable ways, and it is not easy to separate intelligence from other human capabilities such as creativity, emotion, and empathy. The current trend is to dumb down the notion of artificial general intelligence in order to hype it, but as we will see shortly, there is a whole range of capabilities that machines do not yet possess.

Rather than confining intelligence to rigid definitions, it is more fruitful to study the systems that manifest it. By understanding their diverse expressions, we can aspire to build artificial systems that replicate their remarkable features. The idea that solving a benchmark equates to achieving AGI is a mirage. Human intelligence flourishes in uncertainty, creativity, and adaptability—qualities that demand exploration far beyond computational metrics. If we truly aim to build entities that parallel human intelligence, we must go beyond benchmarks and venture into the real world, where uncertainty reigns.

This blog post explores the essence of intelligence, contrasting human and artificial systems while examining the role of emotions and the body-mind connection in shaping decision-making. It highlights how AI, despite its rapid progress, struggles with ontological uncertainty due to its lack of embodiment and intuitive reasoning. The discussion paves the way for envisioning next-generation systems designed to overcome these challenges.

Born of Feeling: Emotions as the Blueprint of Human Decision-Making

When testing the limits of intelligence, we often focus on systems designed to measure cognitive performance. Antonio Damasio took a different approach. He studied patients with damage to the ventromedial prefrontal cortex, a region of the brain located just above the eyes. Remarkably, his patients performed normally, sometimes even well above average, on standard intelligence tests. Yet, despite their cognitive strengths, they struggled to navigate uncertainty and real-world scenarios.

This puzzling observation led Damasio to propose his hypothesis of somatic markers. According to Damasio, human intelligence is deeply intertwined with emotions. As we grow—from childhood through adulthood—our minds develop somatic markers: feelings that arise throughout the body and guide our decision-making by framing specific thoughts and experiences.

But what does this actually mean? The idea of somatic markers can feel abstract at first because we often think of decision-making as a purely mental process. Yet, the connection between the mind and body becomes obvious in moments of high stakes. Have you ever faced an incredibly difficult decision and noticed your body reacting before you made the choice? Perhaps you felt a twinge in your stomach, your palms grew sweaty, or the hairs on your arms stood on end. These bodily sensations are part of the well-known fight-or-flight response—a mechanism that prepares us to face danger or make life-altering decisions.

Damasio’s research reveals that these responses are not just primitive reflexes. Instead, they are deeply connected to how we handle uncertainty, providing an emotional framework for choices that could shape our future. Whether you’re avoiding a dangerous encounter or making a crucial decision about your career, these mechanisms help us navigate the unknown, making them an essential component of human intelligence.

The Human Algorithm: How Mind, Body, and Emotions Co-Create Intelligence

Well, here’s the thing: unlike artificial intelligence, human minds don’t come into the world as preloaded software running on predefined hardware. Not at all. Human minds and bodies are co-created, evolving together in an intricate, ever-shifting dance. From the moment we’re born, this connection is tested: hungry cries summon food, and fevers rally both mind and body to fend off invaders. Each challenge reshapes the mind-body system, molding it like clay under fire.

This interplay isn’t mechanical; it’s alive. Your emotions—anger, joy, fear—aren’t just feelings. They ripple through you, a cascade of chemical and electrical signals transforming the architecture of your mind while your body responds in kind. Anger tightens your chest, sharpens your focus, and energizes your muscles. Sadness softens your posture and slows your breath. Together, these emotions create a morphing, adaptive system, where thought and sensation are inseparably fused.

As you grow, this system evolves. What begins as primal urges—crying for sustenance, recoiling from danger—becomes something more complex. Over time, emotions become maps: somatic markers that guide decisions, shape identities, and fuel creativity. When you feel joy, it’s not just a fleeting moment—it’s your body and mind harmonizing to light up new pathways, making you more than you were a moment ago.

But this isn’t the end of the story. The mind eventually learns to simulate—to imagine what it feels like to act without moving a single muscle. This ability to step outside the body, to abstract and project, creates new possibilities. In moments of uncertainty, your mind becomes a shapeshifter, conjuring “as if” worlds to prepare for what lies ahead. Yet even in these moments of pure computation, the echoes of your body linger, shaping the very fabric of your thoughts.

This dynamic, morphing system—the fusion of mind and body—is what allows us to thrive in a world of chaos and unpredictability. Unlike AI, we are not static processors. We are fluid, ever-changing beings, molded by the unison of our physical and mental selves.

From Cells to Selves: How Collective Intelligence Drives Human Decision-Making

You might wonder: what do bodies truly contribute to our intelligence? Are they just vehicles for DNA, implementing genetic instructions without much else? Not exactly. To understand their deeper role, let’s explore the concept of basal cognition. Basal cognition shows us that cognitive processes, such as sensing, evaluating, remembering, and adapting, are not limited to animals with complex brains. Even organisms without any brain at all, such as amoebae, slime molds, plants, and bacteria, demonstrate behaviors that can be described as cognitive. Cells, despite their simplicity, perform remarkable tasks, and their potential only grows when they work together.

As Michael Levin and Daniel Dennett describe in Cognition All the Way Down:

“The other amazing thing that happens when cells connect their internal signaling networks is that the physiological setpoints that serve as primitive goals in cellular homeostatic loops, and the measurement processes that detect deviations from the correct range, are both scaled up”.

This scaling is fundamental. The collective intelligence of cells forms the basis for higher-order processes in the body, creating a seamless feedback loop between mind and body. The somatic markers hypothesis suggests that these low-level mechanisms are continuously leveraged into our everyday thinking, expanding their influence far beyond their origins. My own intuition is that competencies from many different problem spaces are leveraged through emotion into thinking. For instance, the body’s innate knowledge of physical space is crucial for developing “gut” feelings, our intuitive ability to handle uncertainty. This intuition stems from the body’s deep integration with the mind, using information about the physical world to guide decisions. Another example is an extended memory footprint: bodies carry a remarkable bioelectric memory that lets them reach morphological goals even under dire circumstances. Mechanisms like these may act as a booster for working memory and attention.

However, this system is not infallible. As Michael Levin notes, cancer represents a failure of this integration—a localized reversion to an ancient, unicellular state. In cancer, the boundary of the self collapses, with the affected cells treating the body as mere “environment” to exploit: “Cancer, a localized reversion to an ancient, unicellular state in which the boundary of the self is just the surface of a single cell and the rest of the body is just ‘environment’ from its perspective, to be exploited selfishly”.

This failure reminds us of how delicate the balance is between the mind and body. Yet, when the system works in harmony, it can achieve incredible feats. Antonio Damasio emphasizes this interconnectedness in his exploration of the autonomic nervous system: “Blood vessels everywhere, including those in the thick of the most extensive organ in the body, the skin, are innervated by terminals from the autonomic nervous system, and so are the heart, the lung, the gut, the bladder, and the reproductive organs. Even an organ such as the spleen, which is concerned largely with immunity, is innervated by the autonomic nervous system”.

This intricate web of connections ensures that the nervous system and the body are co-dependent, continuously sharing information and adapting to challenges. Through this interplay, emotions—far from being mere feelings—become tools for scaling the competencies of the body into the realm of thought. Positive emotions, for example, fuel complex psychological states like motivation and creativity, while negative emotions warn us of potential threats.

Ultimately, the mind-body connection is a morphing system—an intricate network where low-level agents cooperate, scale their influence, and build the scaffolding for higher cognition. It is through this dynamic integration that we navigate uncertainty, adapt to our environment, and create identities that transcend our physical selves.

Emotional Feedback Loops: Acting Fast, Reflecting Later

So, you’re constantly receiving information about how your body “feels”. What should you do with it? Ideally, you’d take the time to understand it completely—develop hypotheses about why you feel the way you do, test them, refine them, and improve your predictions. But this approach comes with two significant challenges.

First, emotional processing quickly becomes overwhelmingly complex. Have you ever tried tracing the origins of a specific emotional state? Each answer branches into countless possibilities, leading you deeper into a web of interconnected thoughts and memories. By the time you begin to untangle it all, you encounter the second problem: time. The world moves too fast for deep reflection. Game over. The metaphorical lion has already caught you, leaving you no choice but to act instinctively to survive. This is precisely the mechanism Ben Goertzel describes in The Hidden Pattern:

“What causes [emotional] mental states to register in the brain’s virtual multiverse model in a delayed way? And why is that even remotely useful? Maybe because it makes the agent act rapidly to overcome uncertainty while marking, as accurately as possible, ‘branching’ points in decision space”.

In real time, your mind is blocked from fully understanding the reasons behind the unfolding images, forcing you to act like an anticipatory machine. You prioritize rapid action over analysis, leveraging the mind-body dynamic system to manage uncertainty. Yet emotion doesn’t disappear after the moment has passed. It leaves behind memory markers: deep imprints in your mind that draw you back to the event long after it’s over. These markers allow for retrospective analysis and refinement, helping you revise and improve your understanding for the future.

This process demonstrates the remarkable adaptability of the mind-body connection. Even when immediate understanding is sacrificed, the system captures enough information to make sense of it later. In this way, emotions aren’t just fleeting signals; they’re tools for navigating complexity and uncertainty, creating a feedback loop that shapes how we think, feel, and act.

Engineering Intelligence: How AI is Built on Statistics and Learns to Think

Now that we have looked at some of the most fundamental features of human intelligence, let’s turn to artificial intelligence, see how it works, and compare the two systems. Artificial intelligence, as we currently know it, builds its intelligence by compressing large amounts of data into patterns it can learn from. Simply put, AI identifies statistical relationships, with intensive computation as its foundation. The training objective is to predict the next token. While this might not seem like much, it’s a groundbreaking concept: by “hiding” the next token, the model must deeply “understand” the context (everything that came before) to predict what comes next. On the most fundamental level, the model has to understand reality in order to predict it. To introduce variety, which often reads as creativity, the system adds randomness during its decoding phase.
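To make the “randomness during decoding” idea concrete, here is a minimal sketch of temperature sampling, one common way that randomness is introduced. The vocabulary and scores are made up for illustration, and the function names are my own; real systems work over tens of thousands of tokens and often add further tricks on top.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocab, logits, temperature=1.0, rng=random):
    """Pick one token at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical scores a model might assign after "The cat sat on the"
vocab = ["mat", "sofa", "roof", "moon"]
logits = [3.0, 2.0, 1.0, -1.0]

cold = softmax(logits, temperature=0.2)  # almost all mass on the top token
warm = softmax(logits, temperature=2.0)  # flatter, so unlikely tokens get a chance
print([round(p, 3) for p in cold])
print([round(p, 3) for p in warm])
print(sample_next_token(vocab, logits, temperature=1.0, rng=random.Random(0)))
```

At low temperature the model nearly always picks its top guess; raising the temperature spreads probability onto less likely continuations, which is where the surprising outputs come from.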

However, this method has its limits. For one, human language can never fully describe the complexities of reality. And second, the amount of meaningful text about reality is finite. To move beyond these limitations, researchers have explored a new frontier for enhancing intelligence: teaching AI to think. This is akin to what Daniel Kahneman describes as System 2 in Thinking, Fast and Slow—a deliberate, reflective mode of thought.

How does this work? While the idea of reflective AI isn’t new, its recent implementations and scaling are. These silicon-based systems are now capable of metacognition—thinking about their own thinking. Though the exact methods remain unclear, we know test-time computation plays a pivotal role in enabling AI to refine its thoughts in two primary ways:

- Self-refinement: AI systems can simulate possible scenarios to iteratively improve their reasoning. For instance, when faced with a complex problem, the system generates a potential solution, evaluates its effectiveness, and adjusts its approach based on detected errors or inconsistencies. By refining its internal representations in real time, the system builds increasingly accurate models of the problem space, uncovering mistakes and optimizing its reasoning process before committing to a final answer.

- Tree of thoughts with rewards: This method involves using if-then reasoning to explore multiple possible solutions. If a solution works well, the system assigns it a reward, reinforcing that approach for future use.
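Since the exact methods used in production systems are not public, the following is only a toy sketch of the two ideas above, not a description of any real model. The puzzle (reach a target number from 1 using “+3” and “*2” steps), the reward function, and all names are assumptions chosen for illustration. The reward here is used only to rank and prune candidates; the reinforcement step that would carry a good strategy over to future problems is left out.

```python
# Toy problem (hypothetical): starting from 1, reach TARGET using "+3" and "*2".
TARGET = 25
STEPS = {"+3": lambda x: x + 3, "*2": lambda x: x * 2}

def run(plan):
    """Execute a plan (a list of step names) and return the resulting number."""
    value = 1
    for step in plan:
        value = STEPS[step](value)
    return value

def reward(plan):
    """Higher is better; 0 means the plan hits the target exactly."""
    return -abs(TARGET - run(plan))

def self_refine(plan, max_rounds=20):
    """Propose, evaluate, revise: try every single-step edit and keep the best improvement."""
    plan = list(plan)
    for _ in range(max_rounds):
        candidates = [
            plan[:i] + [name] + plan[i + 1:]
            for i in range(len(plan))
            for name in STEPS
            if name != plan[i]
        ]
        best = max(candidates, key=reward, default=plan)
        if reward(best) <= reward(plan):
            break  # no edit helps any more, so commit to the current plan
        plan = best
    return plan

def tree_search(depth=5, beam_width=3):
    """Grow partial plans level by level, keeping only the highest-reward branches."""
    frontier = [[]]
    best = []
    for _ in range(depth):
        expanded = [plan + [name] for plan in frontier for name in STEPS]
        expanded.sort(key=reward, reverse=True)
        frontier = expanded[:beam_width]
        if reward(frontier[0]) > reward(best):
            best = frontier[0]
        if reward(best) == 0:
            break
    return best

draft = ["+3"] * 5
refined = self_refine(draft)
found = tree_search()
print(draft, run(draft), reward(draft))
print(refined, run(refined), reward(refined))
print(found, run(found), reward(found))
```

In this toy setting the refinement loop improves on its first draft but stalls at a near miss, while the tree search, which keeps several branches alive at once, reaches the target exactly. That contrast hints at why exploring multiple lines of thought can pay off when a single chain of revisions gets stuck.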

Through these mechanisms, AI can develop a structured, step-by-step plan for tackling problems: a metacognitive strategy that allows it to score highly on benchmarks like ARC-AGI. Yet, even with these advancements, limitations remain. My suspicion is that this kind of system will struggle in situations of heightened uncertainty, where only creativity, intuition, and emotion can navigate the unknown.

The challenge ahead lies in integrating these human-like traits into AI. While metacognition is a critical step forward, the path to overcoming ontological uncertainty requires a fusion of reasoning and imagination—a task that may redefine what it means for machines to think.

From Intuition to Creativity: How AI Will Evolve Beyond Problem-Solving

Building on how AI systems like ChatGPT operate today, my prediction for their evolution is as follows: while current systems rely on statistical pattern recognition and metacognition to solve problems, the next step involves advancing beyond System 2 thinking (as described in Thinking, Fast and Slow). These systems will need to develop a deeper adaptability, enabling them to rapidly model their environments and make intelligent decisions with minimal data.

This evolution builds on concepts like test-time computation and self-refinement, where AI systems iteratively evaluate and improve their reasoning. By extending these mechanisms, future AI will operate more intuitively, much as humans do when making decisions in uncertain or data-scarce scenarios. For example, just as a human can quickly grasp the tone of a conversation or anticipate another’s intentions, AI will become adept at understanding its users, reducing ambiguity, and adapting its responses dynamically. This will enhance communication and make these systems more practical in real-world applications.

A critical enabler of this shift will be the integration of multi-modal data—text, video, audio, and more—into AI training and inference. As systems learn to process and combine these diverse inputs, their understanding of the world will expand, paving the way for greater creativity. Generative models, such as variational autoencoders (VAEs) and GANs, are already hinting at this potential. However, in the future, these models won’t just generate creative outputs; they’ll pose new and meaningful questions, helping us explore problems we haven’t yet considered.

As these systems mature, the focus will inevitably shift to autonomous agents capable of generating their own goals. This represents a significant turning point. How can we ensure that self-running systems not only solve problems and innovate but do so in alignment with human values? Ensuring this alignment will require embedding principles of creativity, intuition, and emotional reasoning into these agents. Drawing from the earlier discussion on AI’s limitations under ontological uncertainty, the challenge lies in designing systems that navigate the unknown with sensitivity to both human intent and the broader impact of their actions.

Ultimately, this trajectory mirrors the broader progression of AI from static pattern recognition to dynamic, self-directed reasoning. It begins with leveraging compression and statistical modeling, evolves through metacognition and test-time adaptation, and culminates in systems that create, innovate, and align their goals with ours. The question is not just how far AI will go, but how thoughtfully we guide its development to ensure it remains a tool for understanding and bettering the world.

Beyond Humanity and AI: The Fusion of Minds and Machines

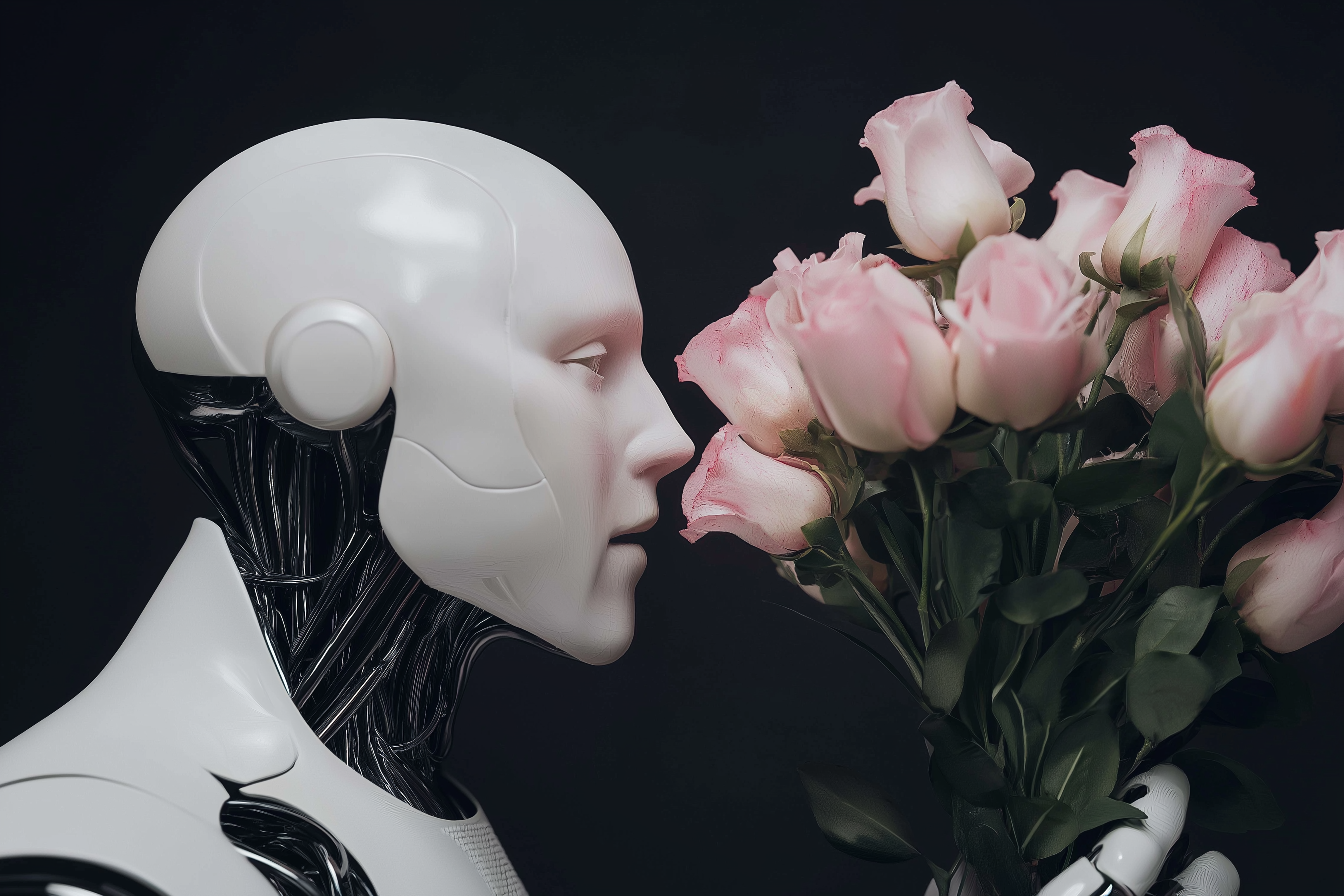

There is still an unanswered question: what about emotion? Will future AI need both emotion and embodiment to think properly? While the answer is still debated, I believe that achieving human-level reasoning will require both, working together in systems where hardware and software co-evolve, continuously adapting for survival and complexity.

But there’s another side to this story: while AI learns to become more human-like, humans are simultaneously merging with technology. Much of human life is now spent in front of computers. As technology evolves—from the printing press to brain-machine interfaces—we see it becoming an extension of our identity and sense of self. Neuralink, for example, envisions a two-way communication system with computers, enabling us to act on them with zero latency and receive input directly into our brains. Such advancements could transform us into super-intelligent cyborgs, where emotion, creativity, and cognition operate seamlessly across biological and digital domains.

This integration isn’t limited to brain-machine interfaces. Imagine a world where adapters translate neural signals into latent space vectors, enabling us to generate images, music, or even fully formed ideas using only our thoughts. We could even communicate with each other directly through this interface, skipping language entirely and sharing more accurate representations of our brain states. Early versions of such systems already exist, allowing us to generate artistic representations directly from brain activity. Meanwhile, at a more fundamental level, computers are designing synthetic life forms, like xenobots, that blur the line between biology and robotics. These entities are neither robots nor organisms but something entirely new, signaling the emergence of hybrid intelligences.

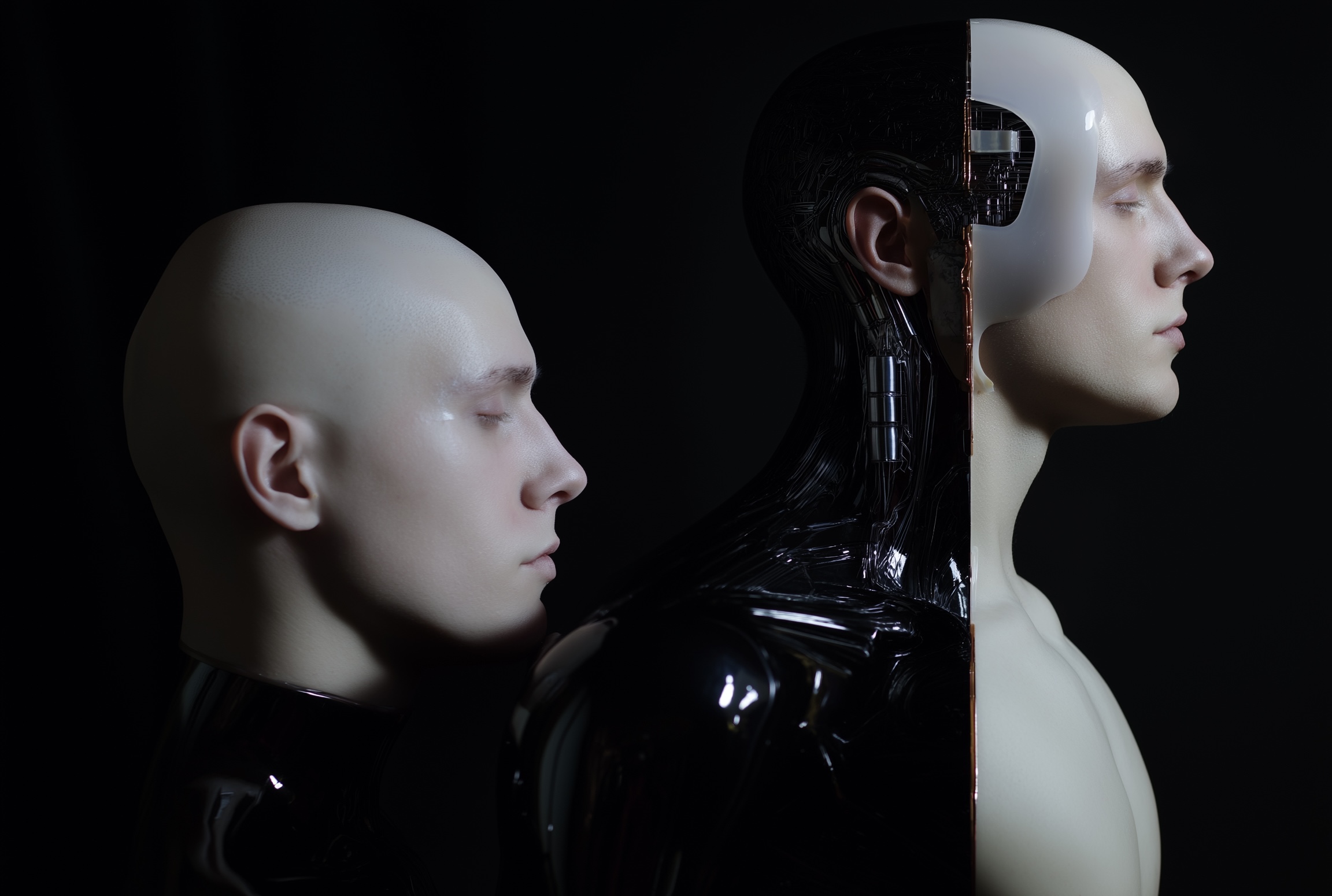

As these technologies advance, we’re moving toward a convergence of two stacks: carbon-based and silicon-based intelligence. On one side, humanity integrates ever more deeply with computers and AI. On the other, AI and robots grow bodies, develop emotions, and learn to think like humans. This merging is leading us toward the creation of a new species—a post-human entity capable of fostering diverse forms of intelligence. This entity could encompass biological minds, computer-based systems, prior-biological intelligences running on silicon, embodied hybrids, and even distributed swarms of hive minds.

What will such a future look like? I believe it holds infinite possibilities. A post-human intelligence will likely possess not only cognitive empathy but also the ability to reconfigure itself for different types of tasks, thinking styles, and emotional states. It will be capable of fostering all kinds of minds, creating a world rich in diversity and creativity. As Michael Levin suggests in his essay AI Could Be a Bridge Toward Diverse Intelligence, this evolution might help us understand intelligence in ways that transcend our current biological limitations, paving the way for a future that’s as ethically complex as it is intellectually profound.
